AI Comes Home

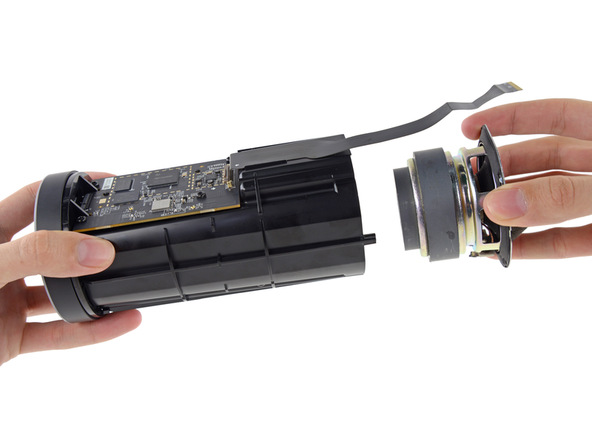

As a designed object, Echo is a black monolith. Like the monolith from 2001, it offers little in the way of visual indicators or feedback. A lit ring at the top of this black cylinder features seven states, ranging from oscillating purple for a Wi-Fi error to a solid red light denoting that the microphone is turned off. Most of the time, however, it fluctuates between the active state of lights off and the processing state of swirling blue and cyan. The cyan in this pattern points in the direction of the speaker, made possible by its integration with seven ring-mounted microphones. Eschewing traditional anthropomorphic conventions in robotics, Echo comes closest to “HAL” from 2001 in its use of a simple pulsing light; indeed, custom HAL ‘skins’ for the device can already be purchased.

But environment is important. Echo isn’t hardwired into the circuitry of a million-dollar spacecraft, nor situated in the minimal office decor of some blue-chip corporation. Instead, Echo sits at the heart of your home in the kitchen or living room. This is how David Limp, Amazon senior vice president of devices, presented the device during demonstrations for the press in San Francisco: a wood grain kitchen counter, tasteful appliances, pans and mixers situated behind. Advertisements for the device follow suit, showing a family congregating around a coffee table in the living room.

Echo offers itself as the hub of the smart home, while commercials for the device present it as a focus of family life. For both technical and business reasons, therefore, the device wants to be literally positioned in the center of this domestic space. The design aids in this goal. By relying on voice input via microphones, there’s an implicit understanding that the device needs to audibly capture a maximum sphere within the household. By taking the form of a cylinder, the object indicates its omnidirectional nature, as opposed to the cone-shaped and highly focused nature of many vocal microphones. In other words, in order to hear everything, the Echo must be placed at the center of everything.

Always Listening?

The Echo is always listening. This has led to concerns around eavesdropping and data sharing, exemplified in pieces like the Guardian’s article “Goodbye privacy, hello ‘Alexa’: Amazon Echo, the home robot who hears it all.”[1] (Alexa is the name given to the AI which powers multiple devices: Echo, Dot and Tap.) The only problem is, she doesn’t hear it all, in the sense of constantly capturing, storing and parsing audio as information. The device listens for a wake word (‘Alexa’ by default), which triggers it to activate. The audio streamed to Amazon thus begins a “fraction of a second” before the wake word, and ends when the interaction is complete. Amazon seems to have foreseen these privacy concerns, offering a full list of interactions (what Alexa ‘heard’) which can be reviewed and deleted via the smartphone app.
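The wake-word behaviour described above can be pictured with a short sketch. This is an illustration only, not Amazon’s implementation: audio frames are stood in for by words, and the names `PRE_ROLL_FRAMES`, `frames_to_stream` and the `<silence>` end marker are all hypothetical. It shows a small rolling buffer that forgets everything except the last moment before the wake word.

```python
from collections import deque

WAKE_WORD = "alexa"
PRE_ROLL_FRAMES = 2  # hypothetical: how many frames survive from just before the wake word


def frames_to_stream(frames, end_marker="<silence>"):
    """Return only the frames that would leave the device.

    `frames` stands in for short audio chunks, represented here as words.
    """
    pre_roll = deque(maxlen=PRE_ROLL_FRAMES)  # older frames fall off and are forgotten
    streamed = []
    streaming = False
    for frame in frames:
        if streaming:
            if frame == end_marker:
                streaming = False  # interaction complete: stop sending
                continue
            streamed.append(frame)
        elif frame == WAKE_WORD:
            streamed.extend(pre_roll)  # the "fraction of a second" before the wake word
            streamed.append(frame)
            pre_roll.clear()
            streaming = True
        else:
            pre_roll.append(frame)  # held locally, never sent
    return streamed


heard = ["pass", "the", "salt", "please", "alexa", "what", "time",
         "is", "it", "<silence>", "thanks", "dear"]
print(frames_to_stream(heard))
# ['salt', 'please', 'alexa', 'what', 'time', 'is', 'it']
```

In this toy model the dinner-table talk before and after the request never leaves the device, which is the distinction the paragraph draws between listening and capturing.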

The classic Orwellian critique, then, appears to have largely fallen flat. Bloomberg, for example, notes that these criticisms were voiced at launch: “Some called it a useless gimmick; others pointed to it as evidence of Amazon’s Orwellian tendencies. Then something weird happened: People decided they loved it.” Indeed, one could speculate that the ‘gimmicky’ nature of Echo helped to undermine any substantial concerns about the device as a serious mechanism of surveillance. The device can be extended using Skills from the Amazon Marketplace. Many of these are light, frivolous, facetious: ‘yo mama’ jokes, fart noises or a chorus of boos voiced for your least favourite family member. In an article for Backchannel, Logan Hill compared the device to a vaudeville routine: “Amazon’s Alexa is a stupendous novelty act that can play thousands of songs, guess your weight, and conjure a pizza from thin air!”[2] Through its spatial positioning and content offerings, Echo bypasses the serious space of business, with its functional demands and ‘real world’ consequences. It steps firmly away from the earnest and towards the amusing, moving out of the office and into the home, out of work mode and into play. Hill thus concludes that “Amazon aimed straight at entertainment, and I think that’s why Alexa is such a success, and the foibles so forgivable.”[3] If data-sharing issues were preempted through technical and design solutions, the ‘all-too-serious’ critique of surveillance is laughed off by an all-singing, all-dancing assistant for entertainment.

Commercial Ecology

However, entertainment and entrainment share the same root. The Echo changes how we live. Despite its unassuming, ‘unfunctional’ approach, or perhaps directly because of it, the device appears to alter the daily behaviour of many of its users. This is not to speak of some Big Brother programme of enslavement but rather to simply acknowledge that the Echo is a particular algorithmic ecology constituted by a particular configuration of hardware and software, agencies and materialities, intentions and specificities. In short, Echo embodies a particular strain of politics, which the user must become attuned to.

This becomes more overt when exploring Amazon’s own examples of how the device is to be used. An early graphic announcing the product listed some common questions which Echo could answer. “Play music by Bruno Mars” indicates a middle-of-the-road, mainstream understanding of culture. Adding “hotel reservations” to a to-do list and “play my dinner party playlist” denote a specific level of financial affluence and social confidence. Perhaps most subtly, “When is Thanksgiving?” casts any concerns about colonization and indigenous peoples aside, conflating the user with an American who doesn’t let messy politics ruin a good holiday. On the Echo homepage, other commands include “playing the Baldwin Bowl playlist” and “playing the new Missy Elliott track”. These aren’t simply random examples but strategic tie-ins which Amazon have arranged as part of their Super Bowl advertising campaign for the device. In other words, these commands are not simply technically functional but commercially functional, tapping into the wider Amazon ecosystem and driving sales.

The Echo establishes a commercial ecology navigated by voice. In order to effectively use the device, the user must enact a particular performance carried out through language. In Amazon’s technical guidelines, this means that skills are “a set of sample utterances mapped to intents as part of your custom interaction model.”[4] What a user says is mapped to something which code can do. In practice, this means that users must trigger actions by memorizing and uttering trademarks, brand names, and slogans. “Alexa, tell Garageio to close my door.” “Alexa, ask Campbell’s Kitchen for a recipe.” “Alexa, ask Fidelity, how is the NASDAQ?” Like physical directions which reference the closest Walmart or Target, Echo’s users must become familiar with navigating a landscape oriented around major corporations and their associated services. At a minimum, users must invoke the name of a Skill: “start Garageio”. However, this is labeled by Amazon as providing ‘no intent’, and the user will be prompted with sample options. A much more fluid experience is therefore obtained when the user utters a “full intent”, recalling and speaking both the app/brand name and a corresponding command fluently.[5] The device thus performs a subtle entraining of who we are and how we should be living.
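The mapping from utterance to intent can be made concrete with a toy resolver. This is a minimal sketch and not the Alexa Skills Kit itself: the `SKILLS` table, the intent names and the `resolve` function are hypothetical, and real matching is far more flexible than a single regular expression. It does show why the brand name has to be spoken before anything else can happen.

```python
import re

# Hypothetical interaction model: sample utterances mapped to intents.
# The intent names are invented for illustration.
SKILLS = {
    "garageio": {
        "close my door": "CloseDoorIntent",
        "open my door": "OpenDoorIntent",
    },
}

# Either "tell/ask <skill> to <command>" (full intent) or "start <skill>" (no intent).
PATTERN = re.compile(
    r"^alexa,? (?:(?:tell|ask) (?P<skill>\w+) to (?P<command>.+)|start (?P<bare>\w+))$"
)


def resolve(utterance):
    """Return (skill, intent); intent is None when only the Skill was named."""
    text = utterance.lower().strip().rstrip(".?!")
    match = PATTERN.match(text)
    if not match:
        return None  # wake word or phrasing not recognised
    skill = match.group("bare") or match.group("skill")
    if skill not in SKILLS:
        return None  # the user has to know the brand name
    command = match.group("command")
    intent = SKILLS[skill].get(command) if command else None
    return (skill, intent)


print(resolve("Alexa, tell Garageio to close my door."))  # ('garageio', 'CloseDoorIntent')
print(resolve("Alexa, start Garageio"))                    # ('garageio', None)
print(resolve("Alexa, close my door"))                     # None
```

The last call fails not because the request is unclear but because no brand was invoked, which is the entrainment the paragraph describes.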

Technology is Female

The device also embodies a particular notion of what technology is. Or, rather, due to the systematic construction of an identity via voice, who technology should be. What kind of personality should a smart home interface possess? What particular characteristics must a family-centred assistant exhibit? Which human attributes, if any, might a machine retain: race, religion, ancestry, ethics, manners? And how does one emulate a personality without those markers which traditionally code an individual?

First and foremost, technology is a she. Alexa follows in a long genealogy of machines, bots and artificial intelligence agents who have been coded as female. Notable examples include operating system assistants such as Apple’s “Siri” or Microsoft’s “Cortana” (originally appearing in the Halo videogame series) and chatbots like Microsoft’s “Tay” and “Xiaoice”. Even Google Now, an ostensibly genderless voice assistant, began life codenamed “Project Majel”.[6] Majel Barrett acted as a nurse on the original Star Trek series, a role which revolved primarily around her unrequited love for officer Spock. Barrett subsequently became the onboard voice of Federation starships in “Star Trek: The Original Series, Star Trek: The Next Generation, Star Trek: Deep Space Nine, Star Trek: Voyager, and most of the Star Trek movies.”[7] From science fiction to an explosion of Silicon Valley-driven products, “what has traditionally been perceived as female instinct, experience, and voice is artificialized, replicated, and sold.”[8]

Why are these vocal agents so often coded as female? One possible reason is that the ‘warmth’ of the feminine voice is seen as a necessary counter to the ‘cold’ logic of the rest of the system: decision trees, semantic encodings, response times. The ‘heartless’ machine is given an affective interface. “So, more warm, more welcoming, more nurturing, all those associations that are connected with women that are not necessary essential qualities but are socially constructed.”[9] Artificiality is made more palatable through empathy.

Alexa follows this pattern, leveraging the female voice both for cohesion and warmth. The device can learn over 2,500 skills. In terms of production, they range widely in professionalism, time and financial investment, from single developers through to major corporations. In terms of content, they also span an incredible gamut, from blackjack to Norse trivia, from Lego to the Bible, from dermatology to aviation.[10] But no matter how uneven or esoteric the Skill is, Alexa speaks them all. The female voice thus performs a vital coherence. An expansive platform is tied together through the consistent intonations of a synthetic yet stable person.

With such expansive content, Alexa must be able to speak it all, so long as it is English: place names, statistics, abbreviations. A text-to-speech engine makes this possible. However, text alone does not contain any emotional ‘markup’. In comparison to other methods, such as audio books, there is no possibility for lyrical readings, altered pitches, timbre shifts or abrupt volume and speed changes. In the words of Amazon’s technical guidelines, “you cannot control the stress and intonation of the speech (prosody).”[11] Developers may use Speech Synthesis Markup Language (SSML), but this is highly limited. One common use is the <break> tag, specifying a pause in speech. Another common use is the <phoneme> element, specifying a precise pronunciation, as in the song lyrics “you say to-may-to, I say to-mah-to.”[12]
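To give a sense of how little this markup can express, here is a small sketch of an SSML fragment using the two elements just mentioned. The `speak`, `break` and `phoneme` elements and the `time`, `alphabet` and `ph` attributes come from the SSML standard; the IPA transcriptions are my own rough approximations, and the Python wrapper only checks that the fragment is well-formed XML rather than sending it to any speech engine.

```python
import xml.etree.ElementTree as ET

# Illustrative SSML: two pronunciations of one word, separated by a pause.
# The IPA strings are approximate and given only as an example.
ssml = (
    "<speak>"
    "You say "
    '<phoneme alphabet="ipa" ph="təˈmeɪtoʊ">tomato</phoneme>,'
    '<break time="500ms"/>'
    " I say "
    '<phoneme alphabet="ipa" ph="təˈmɑːtoʊ">tomato</phoneme>.'
    "</speak>"
)

root = ET.fromstring(ssml)  # raises ParseError if the markup is malformed
tags = [element.tag for element in root.iter()]
plain_text = "".join(root.itertext())

print(tags)        # ['speak', 'phoneme', 'break', 'phoneme']
print(plain_text)  # You say tomato, I say tomato.
```

Stripped of its tags, the text is identical on both sides of the pause: the difference between to-may-to and to-mah-to lives entirely in the markup, and nothing here can make either one sound wry, tired or amused.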

Text-to-speech, therefore, establishes language as a particular set of universal parameters, providing maximum readability but simultaneously negating emotionality. In short, Alexa can say it all, but says it all in the same way. The ostensibly warm female voice is thus seen as a kind of antidote to artificiality. It nudges Alexa out of the uncanny valley, enveloping algorithmic operations in a vocal personality which instrumentalizes feminine stereotypes: affective, emotional, caring, comforting. A recent O’Reilly post on voice interfaces asks the question, “Will your interface be helpful? Optimistic? Pushy? Perky? Snarky? Fun?”[13] For Alexa, the female voice performs a personality in a way that the engine cannot.

What are some other possible reasons for the predominance of female-coded AI? Service is one theory: the roles in which these assistants are often found. Some of the core activities for these agents are helping with queries, noting information and scheduling events. These activities are historically and traditionally associated with woman-dominated roles such as secretary, call-center worker, concierge, and so on. Thus, as Monica Nickelsburg notes, in an industry of flight attendants and travel agents who skew female, both Alaska Airlines and United Airlines chose ‘lady bots’ Jenn and Alex to assist their passengers.[14] Alexa is not overtly coded as a servant, in the way that another digital assistant like “Ask Jeeves” is. She is, however, “always ready”, available at a mere spoken command, 24 hours a day. As in more contemporary notions of service, such as a PA, office administrator, or PR agent, the relationship is reframed as one of communication, collaboration, and competence, rather than one of simplistic domination and servility.

Sex is a second reason often speculated for the predominance of female AI. Like the more general feminization of AI, the sexualized and subservient machine/bot has a long genealogy, from the exotic dancer of Fritz Lang’s Metropolis (1927) and Lester del Rey’s short story “Helen O’Loy” of 1938, through to the fembots designed for pleasure in the recent science-fiction film Ex Machina (2015). Alexa’s Skills don’t skew explicitly in this direction, though such Skills do exist. One example is “Hot Girl”, which lets you “talk flirty to the hot girl” and comes with a disclaimer saying that it isn’t suitable for all ages. “Secret Keeper” allows you to share your “deepest secrets”, while “Eliza” is modeled after the famous bot and begins a psychotherapy session. Other skills in this vein include “Pickup Lines” and “Romantic Movie Quotes”, which is triggered with the command “Alexa, start loving me.”[15] These hand-picked examples, however, need to be contextualised in a much greater variety of Skills. As with service, any sexualization of Alexa is subtle: understated rather than explicit, conversation companion rather than obvious gynoid.

Alexa, without even the option to switch ‘genders’, does nothing to disrupt a notion of femininity embedded in the history of technology. Indeed, the device instrumentalizes these associations in subtle ways. On the one hand, she embodies the capable but ultimately subservient assistant. She’s a great listener, thanks to seven microphones and initial vocal training. She’s highly informed, at least about mundane details like the weather. She will execute tasks without requiring niceties like ‘please’ or ‘thank you’.[16] On the other hand, as one blogger quipped, “Alexa isn’t a secretary. She’s a showgirl.”[17] As noted, Alexa isn’t primarily geared to productivity but to entertainment. She will play music on command. She will gladly spout dumb jokes, inane trivia, endless quotes. When her tricks are no longer amusing, users might send her to the Skills database to upgrade from the 2500+ available.[18]

Wired for Speech is a seminal text in the nascent but rapidly expanding field of VUI (voice user interface) design. Through ten years of research at Stanford, authors Clifford Nass and Scott Brave came to a simple but powerful conclusion about HCI and technology-based voices. Even though users cognitively understand that the voice is emanating from a machine, their behaviours are not significantly different from those of human-human vocal interactions. Nass and Brave elaborate by stating that, “as a result of these automatic and unconscious social responses to voice technologies, the psychology of interface speech is the psychology of human speech: voice interfaces are intrinsically social interfaces.”[19] What, then, does encoding a subservient secretary/showgirl AI as female suggest? In the first Echo commercial, a family are trying out the device when the wife asks it a question rather loudly. The husband whirls around. “You actually don’t have to yell at it, OK?”, he says patronisingly, “it uses far-field technology so it can hear you anywhere in the room.”[20] It seems that whether human or machine, Amazon continues a technological tradition in which females are treated equally, and equally badly.

[1] https://www.theguardian.com/technology/2015/nov/21/amazon-echo-alexa-home-robot-privacy-cloud

[2] https://backchannel.com/amazon-s-echo-is-the-new-vaudeville-9fd11e80a99#.7eryzt6hl

[3] Ibid.

[4] https://developer.amazon.com/public/solutions/alexa/alexa-skills-kit/docs/alexa-skills-kit-glossary

[5] https://developer.amazon.com/public/solutions/alexa/alexa-skills-kit/docs/alexa-skills-kit-voice-design-best-practices

[7] https://en.wikipedia.org/wiki/Majel_Barrett#Star_Trek

[8] https://newrepublic.com/article/121766/ex-machina-critiques-ways-we-exploit-female-care

[9] http://www.artificialbrain.xyz/242/why-is-artificial-intelligence-female.html

[10] https://github.com/dale3h/alexa-skills-list

[11] https://developer.amazon.com/public/solutions/alexa/alexa-skills-kit/docs/alexa-skills-kit-voice-design-best-practices

[12] https://developer.amazon.com/public/solutions/alexa/alexa-skills-kit/docs/speech-synthesis-markup-language-ssml-reference

[14] http://www.geekwire.com/2016/why-is-ai-female-how-our-ideas-about-sex-and-service-influence-the-personalities-we-give-machines/

[15] https://github.com/dale3h/alexa-skills-list

[16] https://hunterwalk.com/2016/04/06/amazon-echo-is-magical-its-also-turning-my-kid-into-an-asshole/

[17] https://backchannel.com/amazon-s-echo-is-the-new-vaudeville-9fd11e80a99#.7eryzt6hl

[18] https://github.com/dale3h/alexa-skills-list

[19] Clifford Nass and Scott Brave, Wired for Speech: How Voice Activates and Advances the Human-Computer Relationship (Cambridge, Mass: MIT Press, 2005), 4.

[20] Amazon.com LLC. “Introducing Amazon Echo.” YouTube. November 6, 2014. https://www.youtube.com/watch?v=KkOCeAtKHIc.